Solopreneurs build with friction. What starts as a pragmatic stack of point solutions (calendar apps, CRMs, task boards, an LLM UI) soon becomes a brittle web of logins, context switches, and undocumented glue scripts. Autonomous AI system tools are not another SaaS to add to the pile; they are a shift in execution architecture. This article is a practical, systems-level analysis of what that shift looks like: category definition, core architecture, deployment and scaling trade-offs, and the operational reality for a one-person company.

What I mean by autonomous AI system tools

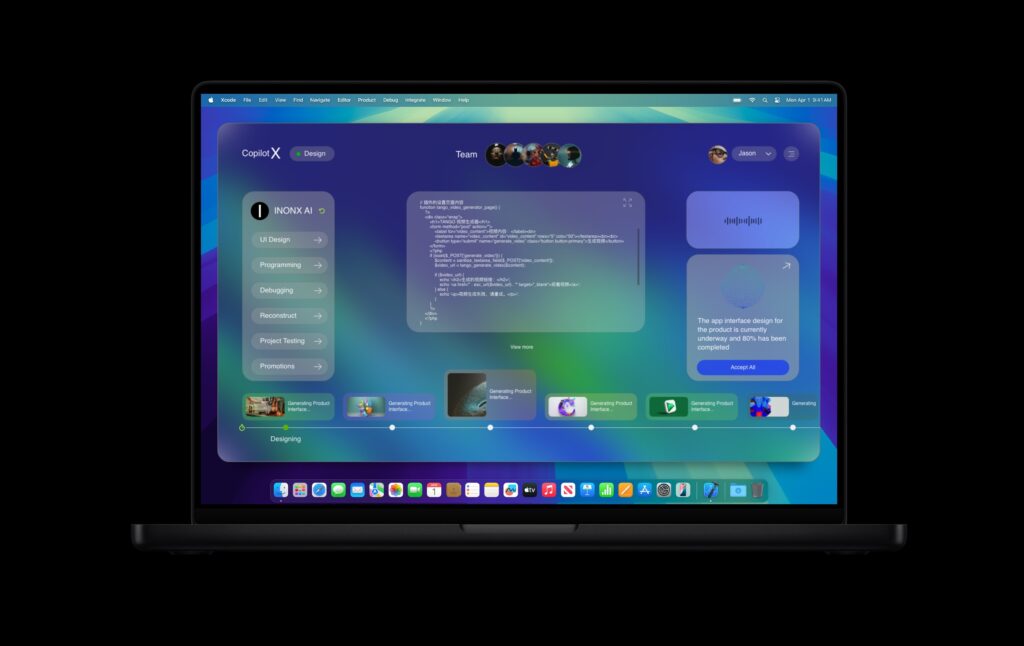

Use the phrase as a system lens rather than a product label. An autonomous AI system tool combines three properties:

- Persistent operational state: it keeps and reasons over multi-session context beyond the immediate prompt.

- Orchestration authority: it composes actions across services and agents, not just returns text.

- Autonomous execution with human governance: it can act on behalf of the operator while exposing safe control points.

Viewed this way, the distinction between tool stacking and an AI operating system is structural. A stack of tools optimizes for interface convenience. An autonomous system optimizes for durable capability: context continuity, predictable failure modes, cost-latency balance, and the ability to compound behavior over months and years.

Architectural model

Break the architecture into four layers. Each has trade-offs that matter at solo scale.

1. Memory and context layer

Designing a memory model is the first architectural decision. Do you keep everything in a single vector store, or partition memory by project, client, or recency? The right model for a one-person company tends to be hybrid: short-term session cache for low-latency reasoning, a mid-term persistent context store for active projects, and a long-term archival index for historical patterns.

Key trade-offs:

- Latency vs cost: Cold retrieval from long-term archives is cheaper but slower; keeping many vectors hot is faster but increases expense.

- Relevance vs noise: Aggressive summarization reduces noise but can lose nuance; conservative retention increases downstream reasoning cost.
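As a rough illustration of the hybrid model described above, here is a minimal sketch of a three-tier lookup. The class name `TieredMemory`, its method names, and the use of plain dictionaries in place of a real vector store are all assumptions made for the example, not a prescribed design.

```python
from dataclasses import dataclass, field
from typing import Optional, Tuple


@dataclass
class TieredMemory:
    """Hypothetical three-tier memory: session cache, project store, archive."""
    session: dict = field(default_factory=dict)    # short-term, low latency
    projects: dict = field(default_factory=dict)   # mid-term, keyed by project
    archive: dict = field(default_factory=dict)    # long-term, cold storage

    def remember(self, project: str, key: str, value: str) -> None:
        # New facts land in both hot tiers; archiving is a separate step.
        self.session[(project, key)] = value
        self.projects.setdefault(project, {})[key] = value

    def archive_project(self, project: str) -> None:
        # Move a finished project's context out of the hot tiers.
        self.archive[project] = self.projects.pop(project, {})
        self.session = {k: v for k, v in self.session.items() if k[0] != project}

    def recall(self, project: str, key: str) -> Tuple[Optional[str], str]:
        # Check the fastest tier first and report which tier answered.
        if (project, key) in self.session:
            return self.session[(project, key)], "session"
        if key in self.projects.get(project, {}):
            return self.projects[project][key], "project"
        if key in self.archive.get(project, {}):
            return self.archive[project][key], "archive"
        return None, "miss"
```

Returning the tier name alongside the value makes the latency-versus-cost trade-off observable: an operator can count how often answers come from the archive and decide whether a partition should stay hot.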

2. Orchestration and agent layer

This is the execution core. Two patterns dominate: centralized conductor and distributed micro-agents.

- Centralized conductor: a single orchestration engine holds the workflow graph and calls services. Simpler for debugging and accountability; easier to enforce transactional behavior and human approvals.

- Distributed micro-agents: autonomous, specialized agents operate semi-independently and communicate via messages. Better parallelism and modularity but introduces state synchronization challenges and eventual consistency headaches.

For solo operators, the centralized conductor usually wins early: it minimizes cognitive load and operational surface area. Transition to distributed agents only when concurrency or specialization demands outstrip what a single conductor can handle.
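To make the centralized conductor pattern concrete, the sketch below runs an ordered list of steps, pauses at any step flagged for approval, and keeps a log in one place. `Step`, `Conductor`, and the `approve` callback are hypothetical names chosen for illustration; a real workflow graph would also need branching and retries.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Step:
    name: str
    action: Callable[[Dict], Dict]   # takes workflow state, returns updates
    needs_approval: bool = False


@dataclass
class Conductor:
    """Hypothetical single orchestrator that owns the workflow and its log."""
    steps: List[Step]
    log: List[str] = field(default_factory=list)

    def run(self, state: Dict, approve: Callable[[Step, Dict], bool]) -> Dict:
        for step in self.steps:
            # Stop at the first gated step the operator has not approved.
            if step.needs_approval and not approve(step, state):
                self.log.append(f"paused:{step.name}")
                break
            state = {**state, **step.action(state)}
            self.log.append(f"done:{step.name}")
        return state
```

Because every step passes through one `run` loop, the log is a complete record of what happened and where the flow stopped, which is the debugging and accountability benefit the pattern is chosen for.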

3. Connector and capability layer

This layer encapsulates side effects: sending email, creating invoices, updating a database, or running a paid ad. Treat each connector as an idempotent, explicitly versioned capability. Failure in a connector should never silently mutate system state.

Design considerations:

- Idempotency keys and compensating transactions

- Clear surface for consent: what can be executed autonomously versus what requires an explicit confirm

- Rate limiting and cost quotas to avoid runaway bills
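The first and third considerations can be sketched together as a thin wrapper around any side-effecting call. The `Connector` class, the `send` callable, and the simple call-count quota are assumptions for the example; a production connector would persist its idempotency records and track spend rather than a bare call count.

```python
from typing import Callable, Dict


class QuotaExceeded(Exception):
    """Raised when the connector has used up its call budget."""


class Connector:
    """Hypothetical wrapper adding idempotency keys and a call quota."""

    def __init__(self, send: Callable[[dict], str], max_calls: int):
        self._send = send
        self._max_calls = max_calls
        self._results: Dict[str, str] = {}   # idempotency key -> prior result
        self.calls_made = 0

    def execute(self, idempotency_key: str, payload: dict) -> str:
        # A repeated key returns the stored result instead of acting twice.
        if idempotency_key in self._results:
            return self._results[idempotency_key]
        if self.calls_made >= self._max_calls:
            raise QuotaExceeded(f"quota of {self._max_calls} calls reached")
        # Count the attempt before calling so failed calls still use quota.
        self.calls_made += 1
        result = self._send(payload)
        # The result is recorded only if the call did not raise.
        self._results[idempotency_key] = result
        return result
```

A retried invoice request with the same key is then a no-op, and a runaway loop hits `QuotaExceeded` instead of producing a surprise bill.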

4. Human governance and interface layer

Autonomy without governance is risk. The operator needs lightweight, contextual controls: approve once per session, roll back an action, or set a soft budget. Interfaces should not be flashy. They should present a concise decision surface tied to state locations in the memory model and logs in the conductor.

Deployment structure and operational patterns

How do you deploy this for a one-person company so it remains manageable?

Minimal viable deployment

- Single conductor instance with a clear task queue and a session cache.

- Persistent context store with project partitions and automated summarizers that run nightly.

- Two policy gates: soft approvals (notification with one-click confirm) and hard approvals (manual step required).
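One way to picture the two policy gates is as a lookup from action name to gate type. The action names, the `GATES` table, and the extra `AUTO` level for ungated actions are illustrative assumptions; the only idea taken from the text is that soft approvals accept a one-click confirm while hard approvals require a manual step.

```python
from enum import Enum


class Gate(Enum):
    AUTO = "auto"   # no gate: runs without confirmation
    SOFT = "soft"   # notification with one-click confirm
    HARD = "hard"   # manual step required


# Hypothetical mapping of actions to gates for a solo operator.
GATES = {
    "send_reminder": Gate.AUTO,
    "send_invoice": Gate.SOFT,
    "change_billing": Gate.HARD,
}


def may_execute(action: str,
                one_click_confirmed: bool = False,
                manual_step_done: bool = False) -> bool:
    # Unknown actions fall back to the strictest gate.
    gate = GATES.get(action, Gate.HARD)
    if gate is Gate.AUTO:
        return True
    if gate is Gate.SOFT:
        return one_click_confirmed or manual_step_done
    return manual_step_done
```

Defaulting unknown actions to the hard gate means a newly added connector cannot act on its own until the operator has classified it.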

Scaling out

Scale by tier, not by adding disparate tools. Common scale levers:

- Shard memory by client or product so reasoning cost is linear, not combinatorial.

- Introduce specialized micro-agents for high-volume tasks such as billing and reporting, while keeping the central conductor for flow control.

- Cache commonly used reasoning outputs and only re-run expensive chains when inputs materially change.

These decisions keep the system from collapsing into a sea of one-off automations that don’t compound.
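The caching lever can be sketched by fingerprinting the inputs to an expensive chain and re-running only when the fingerprint changes. `ReasoningCache` and the `chain` callable are hypothetical, and "materially change" is approximated here by exact equality of the fields the caller passes in, so the caller decides what counts as material by choosing those fields.

```python
import hashlib
import json
from typing import Callable, Dict


class ReasoningCache:
    """Hypothetical cache keyed by a hash of the chain's inputs."""

    def __init__(self, chain: Callable[[dict], str]):
        self._chain = chain
        self._cache: Dict[str, str] = {}
        self.runs = 0   # how many times the expensive chain actually ran

    @staticmethod
    def _fingerprint(inputs: dict) -> str:
        # Sorting keys makes the hash independent of dictionary order.
        canonical = json.dumps(inputs, sort_keys=True)
        return hashlib.sha256(canonical.encode("utf-8")).hexdigest()

    def get(self, inputs: dict) -> str:
        key = self._fingerprint(inputs)
        if key not in self._cache:
            self.runs += 1
            self._cache[key] = self._chain(inputs)
        return self._cache[key]
```

The `runs` counter gives a direct measure of how much reasoning cost the cache is saving.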

Failure modes and recovery

Autonomy introduces new failures beyond service outages: context drift, overgeneralized policies, and emergent behavior across connectors. Plan for them.

- Fail-safe defaults: when in doubt, pause and notify instead of acting.

- Explicit compensation paths: if a connector runs a harmful action, have an automated rollback or an audit trail to revert changes.

- Versioned decision policies: you must be able to roll back to a prior policy that produced acceptable behavior.

Operational resilience is not about eliminating errors. It’s about making errors visible, bounded, and reversible.
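Versioned decision policies, for instance, need little more than an append-only list and a pointer to the active entry. The `PolicyStore` class below is a hypothetical sketch that keeps versions in memory; a real system would persist them alongside the decision logs.

```python
from typing import Dict, List


class PolicyStore:
    """Hypothetical append-only store of decision policies."""

    def __init__(self, initial: Dict):
        self._versions: List[Dict] = [dict(initial)]
        self._active = 0

    @property
    def active(self) -> Dict:
        # Return a copy so callers cannot mutate stored history.
        return dict(self._versions[self._active])

    @property
    def version(self) -> int:
        return self._active

    def publish(self, policy: Dict) -> int:
        # New policies are appended; earlier versions are never overwritten.
        self._versions.append(dict(policy))
        self._active = len(self._versions) - 1
        return self._active

    def rollback(self, version: int) -> None:
        if not 0 <= version < len(self._versions):
            raise ValueError(f"unknown policy version {version}")
        self._active = version
```

Because rollback only moves a pointer, the policy that misbehaved stays available for later inspection.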

Cost, latency, and the trade-offs that matter

Two economic realities are constant: compute costs and human attention. Design to minimize both where possible.

Practical levers:

- Distinguish between planning and acting. Use cheaper models for internal planning and small-context summarization, and reserve larger models for final decisions and high-impact actions.

- Batch side-effects. Group non-urgent API calls into scheduled windows to reduce per-call overhead and rate limit issues.

- Meter actions, not prompts. Tracking cost per executed action, both as a line item and as something the operator notices, discourages overautomation.
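Batching and metering can share one small component. In this sketch, `SideEffectBatcher` and the `send_batch` callable are assumed names, and the scheduled window is represented by whoever calls `flush()`, for example a nightly job.

```python
from typing import Callable, List


class SideEffectBatcher:
    """Hypothetical queue that groups non-urgent calls and counts actions."""

    def __init__(self, send_batch: Callable[[List[dict]], None]):
        self._send_batch = send_batch
        self._pending: List[dict] = []
        self.actions_metered = 0   # counts actions, not prompts

    def submit(self, call: dict, urgent: bool = False) -> None:
        self.actions_metered += 1
        if urgent:
            # Urgent calls bypass the window and go out immediately.
            self._send_batch([call])
        else:
            self._pending.append(call)

    def flush(self) -> int:
        # Called once per scheduled window; sends everything queued so far.
        batch, self._pending = self._pending, []
        if batch:
            self._send_batch(batch)
        return len(batch)
```

Swapping the pending list out before sending means a call submitted during a flush lands in the next window rather than being lost.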

Why tool stacks collapse and why systems persist

Stacks of point tools fail to compound because they optimize for immediate completion, not persistent state. Each tool serializes context in a different format, lacks consistent identity for entities, and demands manual reconciliation. The maintenance cost grows quadratically with the number of integrations.

An AI operating system approach, where autonomous AI system tools form the execution substrate, solves that by centralizing identity, memory, and policy. That centralization has costs: you accept more upfront complexity and responsibility. But it compounds: behaviors, once encoded and refined, improve future outcomes without manual reconfiguration.
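The quadratic claim can be checked with simple arithmetic. The sketch assumes the worst case, in which every pair of tools needs its own glue, and compares it with a hub in which each tool integrates once with a central system. Both function names are illustrative.

```python
def pairwise_integrations(n_tools: int) -> int:
    # Worst case: one integration per unordered pair of tools, n(n-1)/2.
    return n_tools * (n_tools - 1) // 2


def hub_integrations(n_tools: int) -> int:
    # Centralized case: each tool connects once to the shared substrate.
    return n_tools
```

Under these assumptions, five tools need up to 10 pairwise integrations and ten tools need up to 45, while the hub needs 5 and 10 respectively. Real stacks rarely connect every pair, so treat the pairwise figure as an upper bound.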

Real solo operator scenarios

Scenario 1: Client onboarding

Problem: A solopreneur spends hours onboarding clients—collecting documents, setting expectations, creating accounts. Point tools create repeated manual steps.

System solution: The conductor runs a templated flow. Memory attaches onboarding artifacts to the client partition. Connectors create accounts with idempotency; soft approvals surface high-risk actions (billing configuration). Nightly summarizers produce a one-page onboarding brief for the operator, which reduces meetings and increases consistency.

Scenario 2: Content production and distribution

Problem: Multiple content ideas, fragmented briefs across notes and chats, inconsistent brand voice.

System solution: The persistent context maintains a brand voice vector and canonical style guide. An autonomous creative agent drafts, the conductor queues distribution, and the operator reviews a compact diff rather than reauthoring each asset. The system learns preference via approvals and gradually reduces human intervention where reliability is high.

Human-in-the-loop design

Human control is not the absence of autonomy; it’s an operational axis. The interface must expose why a decision was recommended: source memories, recent policy changes, connector status. For engineers, this means designing explainable traces and retention of decision artifacts. For operators, this means trust.

Two governance patterns:

- Confidence thresholds that translate model certainty into execution modes (suggest, queue, run).

- Policy rehearsals that simulate outcomes and surface potential conflicts before execution.
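The first pattern reduces to a small mapping from a confidence score to one of the three execution modes. The threshold values 0.6 and 0.9 below are placeholders chosen for the example, not recommendations, and the sketch assumes the confidence score is already calibrated to the range 0 to 1.

```python
def execution_mode(confidence: float,
                   suggest_below: float = 0.6,
                   run_at: float = 0.9) -> str:
    """Map a confidence score in [0, 1] to suggest, queue, or run."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if confidence < suggest_below:
        return "suggest"   # show the operator a recommendation only
    if confidence < run_at:
        return "queue"     # prepare the action and wait for approval
    return "run"           # execute autonomously
```

Keeping the thresholds as parameters lets them live in a versioned policy, so tightening or loosening autonomy is a policy change rather than a code change.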

Long-term implications

For operators and investors, the key idea is compounding capability. Point tools produce ephemeral productivity gains. Autonomous systems, when well-engineered, produce structural leverage: a single policy change can improve outcomes across customers, content, and cash flow.

For engineers and architects, this is a call to design for statefulness, visibility, and gradual autonomy. Build reversible actions, version policies, and keep memory modular. For solopreneurs, the ask is discipline: accept a small amount of upfront engineering and governance work to unlock months or years of reduced routine overhead.

Practical takeaways

- Think of autonomous AI system tools as an execution substrate, not an add-on. Centralize identity, memory, and policy to avoid glue code that grows faster than the number of tools.

- Start simple: a centralized conductor plus a partitioned memory model will cover most solo operator needs.

- Design connectors for idempotency and explicit rollback. Human oversight should be lightweight but decisive.

- Control cost by separating planning and acting, batching side effects, and metering actions.

- Adopt an AI operating system mindset: prioritize durable capability over transient convenience. When done right, the system becomes a compound engine for your work, functioning as an AI startup assistant and a realistic workspace for an AI business partner, rather than another task on your to-do list.