Introduction: an operational lens

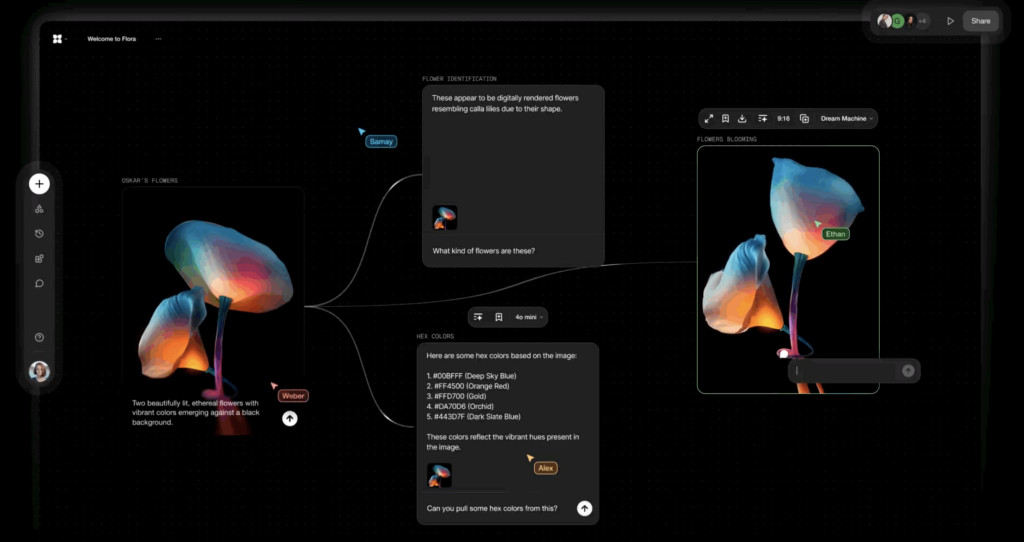

For a solo operator, the difference between tools and systems is not semantics. It is survival. Small teams and one-person companies cannot treat AI as a widget to add to a stack; they need infrastructure that compounds. An AI operating system app is the design pattern that converts intermittent automations into a durable, composable digital workforce.

Why stacked SaaS breaks down

Most solopreneurs begin by combining best-of-breed tools: calendaring, email automation, a design editor, a CRM, and a handful of point AI services. Early on this feels efficient. After a few months the gaps appear: identity and context are duplicated, actions are not atomic across systems, and simple changes cascade into manual recovery work. The operational cost is cognitive load plus time spent firefighting.

- Fragmented context: each tool stores its own state. Rehydrating what happened across a customer lifecycle becomes manual and brittle.

- Non‑composable automations: each automation is a brittle point-to-point integration. There is no single source of truth to coordinate complex flows.

- Operational debt: one-off scripts and Zapier chains accumulate; debugging them under load is slow and risky.

- No durable capability: improvements rarely compound. A nicer prompt or a new API call boosts one workflow, but system-level leverage is missing.

Category definition: what an AI operating system app is

An AI operating system app is not a wrapper around LLMs. It is an execution substrate that treats AI as a structured organizational layer (agents, memory, planners, connectors, and observability) held together by a runtime that enforces state, identity, and recovery semantics. It emphasizes durable capability (what you can do reliably) over novelty (what you can do once).

Core responsibilities

- Context persistence and memory: keep a single, searchable account of interactions and artifacts.

- Orchestration: schedule, route, and coordinate multiple agents and external systems.

- Execution safety: idempotency, retries, rollbacks and human escalation points.

- Observability and auditability: trace every automated decision back to data and rules.

Architectural model

Keep the architecture pragmatic: an ingest layer, a memory and state plane, an orchestration core, agents as pluggable workers, and connectors to external systems. Each layer has trade-offs that matter to a solo operator.

Memory and context persistence

Memory is not just a vector database. Treat memory as tiered: working memory (recent events, short context windows), episodic memory (transactions and decisions), and semantic memory (profiles, preferences, policy). Use immutable event logs for auditability and versioned snapshots for fast rehydration. This lets agents reconstruct context cheaply and accurately.
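The tiering described above can be sketched roughly as follows. This is a minimal in-memory illustration, not a storage design: the `Event` and `MemoryStore` names, the field names, and the "merge payloads into a dict" state model are all assumptions made for the example.

```python
from dataclasses import dataclass, field
from typing import Any


@dataclass(frozen=True)
class Event:
    """One immutable entry in the episodic log (hypothetical shape)."""
    seq: int
    kind: str
    payload: dict[str, Any]


@dataclass
class MemoryStore:
    log: list[Event] = field(default_factory=list)           # append-only history
    snapshot: dict[str, Any] = field(default_factory=dict)   # last saved state
    snapshot_seq: int = 0                                    # events already folded in

    def append(self, kind: str, payload: dict[str, Any]) -> Event:
        # Events are only ever appended, never edited, so the log stays auditable.
        event = Event(seq=len(self.log) + 1, kind=kind, payload=dict(payload))
        self.log.append(event)
        return event

    def rehydrate(self) -> dict[str, Any]:
        # Start from the snapshot and apply only the events recorded after it.
        state = dict(self.snapshot)
        for event in self.log[self.snapshot_seq:]:
            state.update(event.payload)
        return state

    def take_snapshot(self) -> None:
        # Fold everything seen so far into a new snapshot for fast rehydration.
        self.snapshot = self.rehydrate()
        self.snapshot_seq = len(self.log)

    def working_memory(self, n: int = 3) -> list[Event]:
        # Working memory here is simply the most recent n events.
        return self.log[-n:]
```

In this sketch, semantic memory (profiles, preferences, policy) would live in the rehydrated state, while the log plays the episodic role. A real system would persist both and version the snapshots.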

Orchestration: centralized vs distributed

There are two pragmatic models.

- Centralized coordinator: a single planner determines flows and issues tasks to stateless agents. Pros: easier global reasoning, simpler failure recovery. Cons: a single control plane can be a bottleneck and single point of failure.

- Distributed agents with protocols: each agent holds autonomy and communicates via a task bus. Pros: resilience and reduced latency. Cons: harder to reason about global state and harder recovery semantics.

For most one-person companies, start with a centralized coordinator that can later evolve toward distributed patterns for high-concurrency paths.
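A centralized coordinator can be very small to begin with. The sketch below assumes agents are plain functions that take the current context and return updates; the `Coordinator` class, its method names, and the "stop on first failure" policy are illustrative choices, not a prescribed design.

```python
from typing import Callable

# A stateless agent: receives the flow context, returns fields to merge into it.
Agent = Callable[[dict], dict]


class Coordinator:
    def __init__(self) -> None:
        self.agents: dict[str, Agent] = {}
        self.flows: dict[str, list[str]] = {}
        # (flow, step, outcome) records, kept for debugging and recovery.
        self.transitions: list[tuple[str, str, str]] = []

    def register(self, name: str, agent: Agent) -> None:
        self.agents[name] = agent

    def define_flow(self, flow: str, steps: list[str]) -> None:
        self.flows[flow] = list(steps)

    def run(self, flow: str, context: dict) -> dict:
        state = dict(context)
        for step in self.flows[flow]:
            try:
                state.update(self.agents[step](state))
                self.transitions.append((flow, step, "ok"))
            except Exception as exc:
                # The single control plane sees every failure in one place.
                self.transitions.append((flow, step, f"failed: {exc}"))
                break
        return state
```

Because all state flows through `run`, global reasoning and recovery stay in one place. The same property is what makes this coordinator the bottleneck to watch as concurrency grows.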

Connectors and identity

Integrations must carry identity and provenance. OAuth and scoped service tokens are table stakes, but you must also standardize event formats and error semantics. A connector abstraction should implement retries, rate-limit backoff, and safe-mode operations when external APIs change.
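One way a connector abstraction might implement retries with rate-limit backoff is shown below. `TransientError` and `call_with_backoff` are hypothetical names, and the sketch assumes the connector translates retryable failures (for example a rate-limit response) into `TransientError` before this helper sees them. Safe-mode handling for changed APIs is not covered here.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class TransientError(Exception):
    """Raised by a connector for failures that are worth retrying."""


def call_with_backoff(
    operation: Callable[[], T],
    max_attempts: int = 4,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return operation()
        except TransientError:
            if attempt == max_attempts:
                raise  # give up and let the orchestrator decide what happens next
            # Exponential backoff: base_delay, 2x, 4x, ... plus some jitter.
            delay = base_delay * 2 ** (attempt - 1)
            sleep(delay + random.uniform(0, delay / 2))
    raise AssertionError("unreachable")
```

Passing `sleep` as a parameter is a small design choice that lets the retry logic be exercised locally without real waiting.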

Operator implementation playbook

This is a playbook for building an AI operating system app, with a focus on delivering durable capability quickly and safely.

Step 1: map your core workflows

Identify 3–5 highest-value workflows you repeat weekly. Example: onboarding a new client, publishing a paid newsletter, or running a consultative sales call. Break each workflow into decision points, data inputs, side effects, and failure modes. If a task is done fewer than twice a month, defer automation.
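If it helps to make the mapping concrete, a workflow inventory can be kept as plain data. The `Workflow` record and `automation_candidates` helper below are hypothetical; the only rule taken from the text is the "fewer than twice a month, defer" threshold.

```python
from dataclasses import dataclass, field


@dataclass
class Workflow:
    name: str
    runs_per_month: int
    decision_points: list[str] = field(default_factory=list)
    data_inputs: list[str] = field(default_factory=list)
    side_effects: list[str] = field(default_factory=list)
    failure_modes: list[str] = field(default_factory=list)


def automation_candidates(workflows: list[Workflow], min_runs: int = 2) -> list[Workflow]:
    """Keep workflows run at least `min_runs` times a month, most frequent first."""
    frequent = [w for w in workflows if w.runs_per_month >= min_runs]
    return sorted(frequent, key=lambda w: w.runs_per_month, reverse=True)
```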

Step 2: define agent roles and boundaries

Agents should be purpose-driven and stateless wherever possible. Define clear APIs for each agent (inputs, outputs, expected side effects). Keep human approvals explicitly modeled as state transitions rather than ad hoc pauses.
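Modeling approvals as state transitions might look like the sketch below. The state names and the `ALLOWED` table are assumptions for a hypothetical "send a document" flow; the point is only that a human approval is an explicit, validated step rather than an ad hoc pause.

```python
from enum import Enum


class State(Enum):
    DRAFTED = "drafted"
    AWAITING_APPROVAL = "awaiting_approval"
    APPROVED = "approved"
    REJECTED = "rejected"
    SENT = "sent"


# Which states may follow which. Anything not listed is rejected.
ALLOWED: dict[State, set[State]] = {
    State.DRAFTED: {State.AWAITING_APPROVAL},
    State.AWAITING_APPROVAL: {State.APPROVED, State.REJECTED},
    State.APPROVED: {State.SENT},
    State.REJECTED: {State.DRAFTED},
    State.SENT: set(),
}


def transition(current: State, target: State) -> State:
    """Return the new state, or raise if the move skips a required step."""
    if target not in ALLOWED[current]:
        raise ValueError(f"illegal transition: {current.value} -> {target.value}")
    return target
```

With a table like this, an agent cannot move a draft straight to `SENT`: the only path runs through `AWAITING_APPROVAL`, which is where the human decision is recorded.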

Step 3: design the memory model

Capture events and artifacts so any agent can rehydrate the context. Use a single source-of-truth record per customer or project. Keep expensive semantic retrieval cached and tuned; keep working memory lean for latency-sensitive tasks.

Step 4: build the orchestrator

The orchestrator coordinates tasks, enforces idempotency keys, and records all transitions. Start with synchronous flows for user-facing operations and use durable task queues for background work. Ensure each task is time-boxed and compensatable.
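Idempotency keys and transition recording can be illustrated with a small in-memory runner. `TaskRunner` and its fields are hypothetical, the results store would need to be durable in practice, and time-boxing and compensation are left out of this sketch.

```python
from typing import Any, Callable


class TaskRunner:
    def __init__(self) -> None:
        self.results: dict[str, Any] = {}               # completed work, by key
        self.transitions: list[tuple[str, str]] = []    # (key, status) history

    def run(self, idempotency_key: str, task: Callable[[], Any]) -> Any:
        # A retry with the same key returns the stored result instead of
        # repeating the side effect (for example, charging a card twice).
        if idempotency_key in self.results:
            self.transitions.append((idempotency_key, "skipped_duplicate"))
            return self.results[idempotency_key]
        self.transitions.append((idempotency_key, "started"))
        try:
            result = task()
        except Exception:
            # Nothing is stored on failure, so the same key can be retried.
            self.transitions.append((idempotency_key, "failed"))
            raise
        self.results[idempotency_key] = result
        self.transitions.append((idempotency_key, "completed"))
        return result
```

A key such as `"invoice-42:charge"` (an invented format) ties the side effect to the business object rather than to a particular attempt.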

Step 5: safety, observability, and manual checkpoints

Add human-in-the-loop checkpoints at high-risk decisions: contractual language, billing changes, or public-facing content. Log inputs and decisions. Expose a replay mechanism so you can re-run a flow with modified parameters to diagnose failures.
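A replay mechanism can start as little more than "store the inputs, re-run the handler". The sketch below assumes flow inputs are JSON-serializable and that handlers are side-effect free or pointed at a sandbox during replay; `record_run`, `replay`, and the log layout are illustrative names, not an established API.

```python
import json
from typing import Any, Callable, Optional

Handler = Callable[[dict], Any]


def record_run(log: list[str], flow: str, params: dict, result: Any) -> None:
    # Serialize each run so the log entry cannot be mutated by later code.
    log.append(json.dumps({"flow": flow, "params": params, "result": result}, sort_keys=True))


def replay(
    log: list[str],
    index: int,
    handlers: dict[str, Handler],
    overrides: Optional[dict] = None,
) -> Any:
    """Re-run a recorded flow, optionally with some parameters changed."""
    entry = json.loads(log[index])
    params = {**entry["params"], **(overrides or {})}
    return handlers[entry["flow"]](params)
```

Replaying with no overrides should reproduce the recorded result; replaying with overrides answers "what would have happened if" without touching the original log entry.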

Scaling constraints and trade-offs

Scaling for a one-person company is not about millions of users; it is about keeping cognitive and operational load manageable while throughput grows. Key trade-offs:

- Cost vs latency: synchronous LLM calls are expensive and slow. Batch and async where possible; use smaller models for routing decisions and reserve bigger models for content creation.

- State fidelity vs storage cost: store recent full snapshots and archive raw logs. Rehydrate from logs only when needed.

- Central control vs speed: centralized orchestration simplifies reliability but creates bottlenecks. Defer decentralization until you have clear hotspots.
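The cost-versus-latency trade-off above often comes down to two small helpers: one that picks a model tier per task and one that groups work into batches. The model identifiers and task kinds below are placeholders, not real model names.

```python
SMALL_MODEL = "small-model"   # placeholder identifier for a cheap, fast model
LARGE_MODEL = "large-model"   # placeholder identifier for a larger model

# Task kinds assumed to be simple routing-style decisions.
ROUTING_TASKS = {"classify", "route", "extract"}


def pick_model(task_kind: str) -> str:
    """Use the small model for routing decisions, the large one otherwise."""
    return SMALL_MODEL if task_kind in ROUTING_TASKS else LARGE_MODEL


def batch(items: list, size: int) -> list[list]:
    """Split items into consecutive chunks of at most `size` for async processing."""
    if size < 1:
        raise ValueError("size must be at least 1")
    return [items[i:i + size] for i in range(0, len(items), size)]
```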

Operational failure modes and recovery

Focus on five practical patterns.

- Idempotency keys: prevent duplicate side effects when tasks are retried.

- Compensating transactions: design reverse actions for destructive operations rather than trusting perfect execution.

- Backoff and circuit breakers: put limits on external APIs to avoid cascading failures.

- Replayable audit logs: keep immutable logs that let you reconstruct state and replay flows after bug fixes.

- Human escalation paths: surface a single action to pause, correct, and retry flows with minimal context loss.
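Of the patterns above, compensating transactions are the least familiar to many operators, so here is a minimal sketch. It assumes each step is supplied as an (action, compensate) pair of zero-argument callables; `run_with_compensation` is a hypothetical helper, and a production version would also log each step and handle failures inside the compensations themselves.

```python
from typing import Callable

Step = tuple[Callable[[], None], Callable[[], None]]  # (action, compensate)


def run_with_compensation(steps: list[Step]) -> bool:
    """Run steps in order; on failure, undo completed steps in reverse order."""
    undo_stack: list[Callable[[], None]] = []
    try:
        for action, compensate in steps:
            action()
            # Only steps that actually completed get a compensation queued.
            undo_stack.append(compensate)
    except Exception:
        for compensate in reversed(undo_stack):
            compensate()
        return False
    return True
```

For example, if "create invoice" succeeds and "charge card" fails, the invoice's compensation (voiding it) runs, leaving no half-finished record behind.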

Human-in-the-loop and UX considerations

The point of an AI operating system app is to reduce toil, not to remove human judgment. Make approvals lightweight: summarize the decision, present the minimal data needed to decide, and provide a one-click accept/rollback flow. Treat notifications as control signals, not logs; each notification should have a clear next action.

Deployment and tech choices

For a solo operator, prefer managed primitives that reduce ops burden: hosted vector DBs, serverless functions, a reliable task queue, and a simple event bus. Keep secrets, tokens, and connector credentials in a minimal trusted vault. Structure deployments so that flows can be simulated and replayed locally without exposing production keys.

Why this compounds where tools don’t

A well-designed AI operating system app turns discrete automations into a platform: adding a new agent or connector increases the system’s reach across many workflows. That compounding effect is the difference between a tool that saves an hour and a system that multiplies the operator’s effective headcount.

Contrast that with an AI automation suite assembled from point products: each product demands onboarding, separate billing, disjointed logs, and bespoke error handling. The marginal cost of integrating another tool is high, and improvements rarely generalize across workflows.

Real-world example

Consider a one-person digital agency that sells three services: strategy calls, a newsletter, and a small consulting product. A traditional stack uses five SaaS services plus custom scripts. An AI operating system app replaces brittle chains with agent-defined flows: intake agent, qualification agent, billing agent, content agent, and delivery agent. The agency reduces handoffs, gains consistent records, and can add new services by wiring new agent roles instead of building custom automations every time.

What This Means for Operators

For builders and solo operators, shift focus from buying features to owning flow. An AI startup assistant app or a bespoke connector matters less than whether your system has predictable recovery, clear identity, and a memory model you can query. Build for compounding capability: the ability to reuse agents, reuse memory artifacts, and increase effective throughput without a proportional increase in cognitive load.

Practical Takeaways

- Design for auditability first. Runbooks and replayable logs pay dividends.

- Start centralized. Centralized orchestration reduces early complexity and makes debugging tractable.

- Tier memory. Keep fast working memory separate from archival logs.

- Model human approvals as state transitions. Make them cheap and reversible.

- Prioritize compounding over point automation. One reusable agent can replace dozens of brittle scripts.

AI is useful when it becomes infrastructure. For solo operators, that means building systems that reduce uncertainty and scale cognitive capacity — not chasing the next surface-level integration.